
Inference at the edge of ambition.

Nine nodes. Eleven GPUs. Five architecture generations. Free AI compute for the communities that need it most.

Data Hub

Aggregate metrics across the ECO-Foundry local cluster.

Cluster Architecture

Nine local nodes connected via Cat 8 Ethernet, with elastic burst capacity in the cloud.

H100, H200, A100, B200, GB300

Elastic burst capacity

Multi-arch

Blackwell

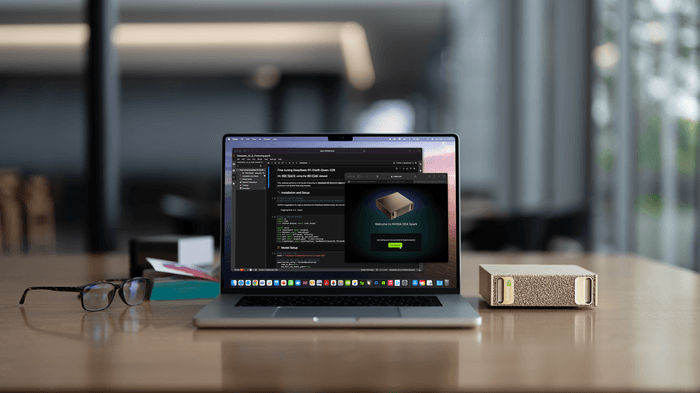

DGX Spark · GB10 Grace Blackwell

128 GB Unified · 1 PFLOPS

Blackwell + Grace

Ampere

RTX 3080 Ti

12 GB GDDR6X

Ampere GA102

RTX 3080 Ti

12 GB GDDR6X

Ampere GA102

RTX 3080 Ti

12 GB GDDR6X

Ampere GA102

Turing

RTX 2080 Ti

11 GB GDDR6

Turing TU102

Pascal

2x TITAN Xp

24 GB GDDR5X

Pascal GP102

GTX 1080

8 GB GDDR5X

Pascal GP104

GTX 1080

8 GB GDDR5X

Pascal GP104

Apple Silicon

M4 Max 40-core

64 GB Unified

Apple Silicon 3nm

ECO-Foundry

Energy-conscious, always-on compute serving local communities. Nine nodes spanning five architecture generations, open for community access.

Feynman

NVIDIA DGX Spark · Grace Blackwell

“What I cannot create, I do not understand.”

NVFP4 · FP8 · INT8 · BF16 · FP16 · TF32 · FP32

Laplace

NVIDIA GeForce RTX 3080 Ti

“The weight of evidence for an extraordinary claim must be proportioned to its strangeness.”

Euler

NVIDIA GeForce RTX 3080 Ti

“Read Euler, read Euler, he is the master of us all.”

Riemann

NVIDIA GeForce RTX 3080 Ti

“If only I had the theorems! Then I should find the proofs easily enough.”

Noether

Dual NVIDIA TITAN Xp

“My methods are really methods of working and thinking; this is why they have crept in everywhere anonymously.”

Dirac

NVIDIA GeForce RTX 2080 Ti

“The aim of science is to make difficult things understandable in a simpler way.”

Gauss

NVIDIA GeForce GTX 1080

“Mathematics is the queen of the sciences, and number theory is the queen of mathematics.”

Planck

NVIDIA GeForce GTX 1080

“Science cannot solve the ultimate mystery of nature. And that is because we ourselves are a part of the mystery.”

Turing

Apple M4 Max · MacBook Pro

“The sciences do not try to explain, they hardly even try to interpret, they mainly make models.”
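The node list above can be captured as a small inventory for the kind of aggregate metrics the Data Hub reports. This is a minimal sketch, not the site's actual data model: the `Node` class and `summarize` helper are hypothetical names, and the memory figures are the per-node totals listed on this page.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    hardware: str
    architecture: str
    memory_gb: int  # total device memory on the node, as listed on this page


# Inventory transcribed from the cards and node list on this page.
NODES = [
    Node("Feynman", "DGX Spark (GB10 Grace Blackwell)", "Blackwell", 128),
    Node("Laplace", "RTX 3080 Ti", "Ampere", 12),
    Node("Euler", "RTX 3080 Ti", "Ampere", 12),
    Node("Riemann", "RTX 3080 Ti", "Ampere", 12),
    Node("Noether", "2x TITAN Xp", "Pascal", 24),
    Node("Dirac", "RTX 2080 Ti", "Turing", 11),
    Node("Gauss", "GTX 1080", "Pascal", 8),
    Node("Planck", "GTX 1080", "Pascal", 8),
    Node("Turing", "Apple M4 Max 40-core", "Apple Silicon", 64),
]


def summarize(nodes):
    """Return node count, nodes per architecture, and total listed memory."""
    return {
        "nodes": len(nodes),
        "architectures": dict(Counter(n.architecture for n in nodes)),
        "total_memory_gb": sum(n.memory_gb for n in nodes),
    }


if __name__ == "__main__":
    print(summarize(NODES))
```

The total mixes unified memory (Feynman, Turing) with discrete VRAM, so it is a headline figure rather than a pool any single model can address.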

Exo-Scale

Elastic GPU capacity in the cloud. When community projects need more than the local cluster can provide, workloads burst to cloud GPUs.

GPU Cloud

The Superintelligence Cloud

Provider: Cloud Partner

GPU Fleet: H100, H200, A100, B200, GB300

Instances: 1x to 8x GPU

Clusters: 16 to 2,000+ GPUs

Software: PyTorch, CUDA, cuDNN

Status: Active

Network

Interconnect topology across the ECO-Foundry and affiliated networks.

Cat 8 Ethernet

40 Gbps rated

S/FTP, 7 Gbps effective

eero Max 7

WiFi 7

Tri-band, 4.3 Gbps

Fiber Uplink

1 Gbps symmetric

UCLA eduroam

WPA2-Enterprise

Internet2, 802.1x

DGX Spark

200 Gbps

2x QSFP

LADWP Power

LADWP

1.75 kW peak GPU draw
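To give the link rates above a practical meaning, the following rough sketch estimates how long it would take to move a model checkpoint over each link. It assumes an idealized transfer (no protocol overhead, no disk bottleneck), and the 100 GB checkpoint size and the `transfer_seconds` helper are hypothetical, chosen only for illustration.

```python
def transfer_seconds(size_gb: float, link_gbps: float) -> float:
    """Idealized time to move size_gb gigabytes over a link_gbps link."""
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    # Gigabytes to gigabits (x8), then divide by the link rate in Gbps.
    return size_gb * 8 / link_gbps


# Link rates as quoted on this page, in Gbps.
LINKS_GBPS = {
    "Cat 8 Ethernet (effective)": 7.0,
    "eero Max 7 WiFi 7": 4.3,
    "Fiber uplink": 1.0,
    "DGX Spark QSFP": 200.0,
}

if __name__ == "__main__":
    checkpoint_gb = 100  # hypothetical checkpoint size
    for name, gbps in LINKS_GBPS.items():
        print(f"{name}: {transfer_seconds(checkpoint_gb, gbps):.0f} s")
```

Real transfers will be slower than these figures, so treat them as lower bounds.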

Built for Impact

Purpose-built infrastructure powering free AI tools for local communities.

Community Inference

Free inference for community partners. Models up to 200B parameters on Feynman. 128 GB unified memory, 1.5+ PFLOPS cluster peak. No API limits, no rate caps, no barriers.
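The 200B-parameter figure depends on the precision the weights are stored in. As a back-of-the-envelope sketch, the code below estimates weight size for a dense model at several of the formats Feynman lists and compares it with the 128 GB of unified memory. It uses nominal bits per weight and ignores block-scale metadata, KV cache, and runtime overhead, so it is an optimistic estimate; the function names are hypothetical.

```python
# Nominal bits per stored weight; block-scale overhead is ignored.
BITS_PER_WEIGHT = {"NVFP4": 4, "FP8": 8, "INT8": 8, "BF16": 16, "FP16": 16, "FP32": 32}

FEYNMAN_MEMORY_GB = 128  # unified memory quoted for the DGX Spark node


def weight_footprint_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes) for a dense model."""
    bytes_total = params_billion * 1e9 * BITS_PER_WEIGHT[fmt] / 8
    return bytes_total / 1e9


def fits_on_feynman(params_billion: float, fmt: str) -> bool:
    """True if the weights alone fit; KV cache and activations are not counted."""
    return weight_footprint_gb(params_billion, fmt) <= FEYNMAN_MEMORY_GB


if __name__ == "__main__":
    for fmt in ("NVFP4", "FP8", "BF16"):
        size = weight_footprint_gb(200, fmt)
        print(f"200B @ {fmt}: {size:.0f} GB, fits={fits_on_feynman(200, fmt)}")
```

Under these assumptions a 200B model needs about 100 GB at 4 bits per weight, which fits in 128 GB, while the same model at 8 bits needs about 200 GB and does not.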

Applied AI Deployment

From climate models to educational tools. Local fine-tuning on the ECO-Foundry, cloud burst via Exo-Scale for larger workloads. We help partners go from idea to deployed model.

Open Compute

52,992 GPU cores across nine nodes available to nonprofits, educators, and community developers. Always available. No cloud dependency.